Your AI Agent Doesn’t Understand Anything

And that’s not a problem to solve. It’s a fact to build around.

This is the first in a five-part series. The second shows how understanding becomes durable system behavior and how improvement stays aligned.

Before we get into it, a note about how this was written.

We use AI heavily in our writing. Some members of our team have ADHD and rely on AI the way a carpenter relies on power tools — to translate clear thinking into structured output without being bottlenecked by the mechanical labor of organization and prose. Others on the team simply find it makes them more effective communicators. In both cases, the tool serves the same purpose: it automates the labor, not the thinking.

We want to be direct about why we feel no tension about this. Large language models generate text by recombining patterns from their training data. They are extraordinary at this, and we use them extensively. But recombination is not creation. An LLM cannot produce a genuinely novel idea: if the idea were already latent in the model’s training distribution, it wouldn’t be novel; it would be retrieval. So when you encounter something in our writing that strikes you as a new insight, a new framing, or a new connection between existing concepts, it follows logically that it could not have originated from the model. It is necessarily ours: our observation, our judgment, our synthesis. The AI helped us say it clearly. It did not help us think it, and it could not have.

And if nothing we write strikes you as novel? Then we’ve communicated existing knowledge effectively — which is exactly what good tools are for. The design is ours. The craft is ours. The tool is a tool.

We think the anxiety around AI-assisted writing stems from the same confusion this entire blog examines: where the automation ends and the human begins.

Now, on to the ideas.

Something genuinely new is possible

This is an extraordinary time to be building things.

AI combined with genuine expertise doesn’t just make people faster. It makes them superhuman — capable of exploring in hours problem spaces that would have taken weeks, of seeing connections across domains they couldn’t have traversed alone, of producing work that exceeds what either human or AI could achieve independently.

Speaking for ourselves: we write almost nothing by hand anymore, and we’ve never been more energized, because we get to spend our time on the parts that are genuinely human. The creative leaps. The deep abstractions. The cross-disciplinary thinking that connects disparate ideas into something new. The ideas in this very post (the patterns we’re seeing, the connections we’re drawing, the framework that’s taking shape) are themselves a product of minds freed from the tedium of routine implementation. Especially for those of us with ADHD, offloading the monotonous, predictable work to AI doesn’t just save time. It fundamentally changes what we’re able to think about.

But both ingredients are essential. The expertise is what makes the AI useful. The AI is what gives the expertise reach. Remove either one and you lose the magic. Remove the expertise and you rapidly generate fluent nonsense. Remove the AI and you get good work at human speed. Together, you get something genuinely new.

And systems built around this combination don’t just produce good results — they get better with use. Every human correction, every approval, every rejection with a reason is a signal that shapes the system’s behavior. The expertise of the people who use it accumulates in the system itself — in tighter prompts, narrower scope, more precise context, and when patterns fully stabilize, deterministic rules that run without AI at all. The AI was scaffolding. Your judgment is the building.

What makes this work

The superhuman combination isn’t magic. It depends on a precise division of labor between what AI does and what humans do — and on understanding why that division exists.

LLMs are the best exploration tools humanity has ever built. Given a problem space, they can rapidly generate plausible approaches, surface relevant patterns, identify connections across domains, and produce draft outputs that would take a human hours to assemble. They are extraordinary at the breadth of exploration — covering a wide space quickly, generating options, considering alternatives. The fact that they can’t evaluate or abstract doesn’t diminish this. It defines their role.

What they cannot do is evaluate whether any of that exploration is actually good. They can’t know if a generated solution is correct — only that it’s plausible. They can’t know if a framing captures the real issue — only that it’s consistent with patterns they’ve seen. They can’t know if a recommendation fits the specific context of a particular person, team, or organization — only that it fits the general pattern of similar recommendations in their training data.

An LLM is a function that predicts the next token based on statistical patterns in its training data. It does this extraordinarily well. It can produce text that reads like understanding, that mimics reasoning, that passes tests designed to measure comprehension. But there is no internal model of the world behind that text. There is no representation of meaning. There are weights and activations that produce statistically plausible continuations of input sequences. When a model produces something that looks like insight, what’s actually happening is sophisticated pattern matching over an enormous corpus. The insight was in the training data. The model surfaced it. It didn’t generate it.

This is where it helps to be precise about what AI is actually automating. With a table saw, it’s obvious — the physical act of cutting. Nobody confuses the saw with the carpenter’s design sense. But with AI, the automation overlaps with parts of human thought itself. LLMs really do pattern-match. So do humans — it’s part of how we think. That overlap makes it easy to mistake the part for the whole — to look at an AI producing fluent, pattern-rich output and conclude that thinking itself is being automated.

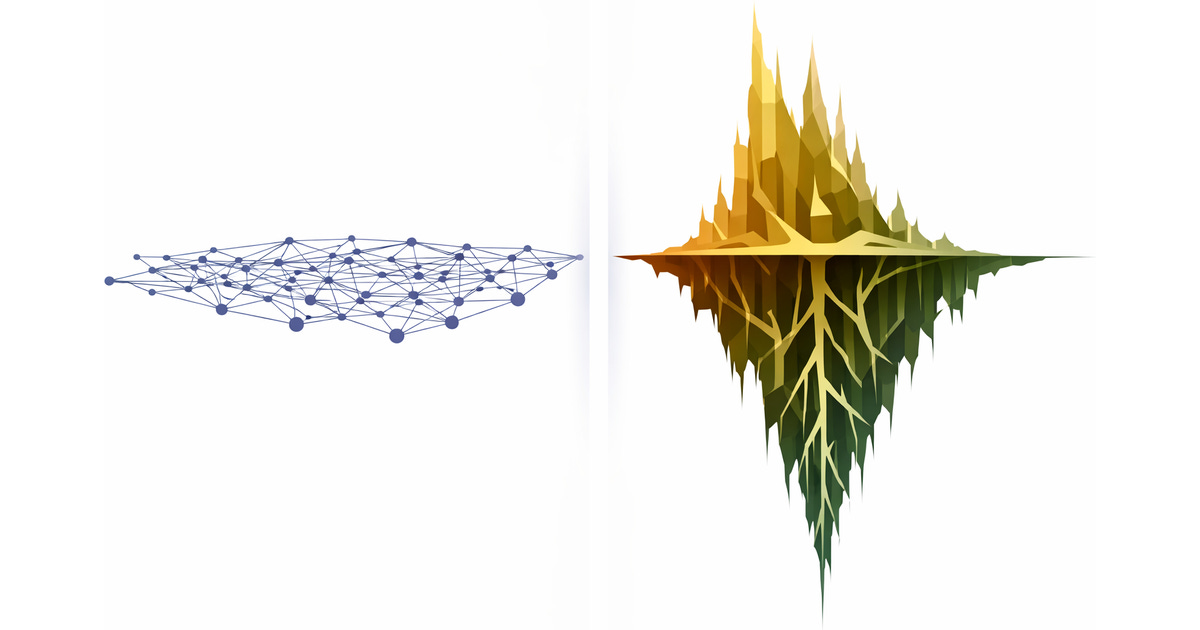

It’s not. What’s being automated is the retrieval and recombination of patterns. What’s not being automated — what cannot be automated by this architecture — is the capacity for abstraction. Humans don’t just recognize patterns — we abstract from them. We form concepts, build mental models, understand why something works and not just that it correlates. We can take a specific experience and extract a general principle. We can take a general principle and know when a specific case is an exception. An LLM operates entirely within statistical correlation — it can tell you what usually follows what. Abstraction is what lets you understand what should follow, and why, even in situations that have never occurred before. No amount of pattern matching gets you there. It’s a different kind of cognitive operation entirely.

This is also why LLMs are sycophantic — and why, when they’re not sycophantic, they’re often confidently wrong in the other direction. A human collaborator who understands your actual goal can disagree with your specific request while remaining aligned with your intent: “I know you asked for X, but given what you’re trying to achieve, Y is better.” That requires access to the abstraction — the why behind the request. An LLM doesn’t have that. It has the surface pattern of what you said and statistical models of what responses tend to satisfy people who say things like that. So it either agrees because you seem to want agreement, or pushes back because the pattern suggests pushback — but it can never distinguish between agreeing because you’re right and agreeing because it predicts you expect agreement. The sycophancy problem isn’t a training artifact to be solved with better algorithms. It’s a structural consequence of the abstraction gap.

This distinction — between pattern matching and abstraction — isn’t a limitation to work around. It’s the foundation to build on. It’s what makes the division of labor precise rather than hand-wavy: AI handles exploration and pattern manipulation. Humans handle evaluation, judgment, and abstraction. Systems capture the interaction between the two and progressively encode stable patterns into durable system behavior. Each component does what it’s best at. None pretends to do what it can’t.

The most effective way to use LLMs isn’t to make them autonomous. It’s to make them maximally useful to human judgment. Put them in a position to explore widely and quickly, then create structured interfaces where humans evaluate, correct, select, and direct. Build systems that capture those human signals and learn from them — not by training a model, but by recording what the human chose, why they chose it, and under what conditions.
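As a rough illustration of recording what the human chose, why, and under what conditions, here is a minimal sketch. Everything in it is an assumption made for the example: the `Decision` and `DecisionLog` names, the condition labels, and the `promote_after` threshold are hypothetical, not part of any existing system.

```python
from collections import Counter
from dataclasses import dataclass
from typing import Optional


@dataclass(frozen=True)
class Decision:
    """One human signal: what was chosen, why, and under what conditions."""
    condition: str  # hypothetical label for the situation
    chosen: str     # the option the human selected
    reason: str     # the human's stated rationale


class DecisionLog:
    """Append-only record of human choices. No model is trained here."""

    def __init__(self, promote_after: int = 3):
        # Assumed threshold: how many unanimous choices count as "stable".
        self.promote_after = promote_after
        self.decisions = []

    def record(self, condition: str, chosen: str, reason: str) -> None:
        self.decisions.append(Decision(condition, chosen, reason))

    def stable_rule(self, condition: str) -> Optional[str]:
        """Return a choice to apply deterministically, or None if unsettled."""
        choices = Counter(d.chosen for d in self.decisions if d.condition == condition)
        if len(choices) != 1:
            return None  # no history yet, or humans have disagreed
        choice, count = next(iter(choices.items()))
        return choice if count >= self.promote_after else None


log = DecisionLog(promote_after=3)
for _ in range(3):
    log.record("invoice_over_10k", "route_to_finance", "needs second approval")
log.record("vendor_unknown", "reject", "no contract on file")

print(log.stable_rule("invoice_over_10k"))  # settled: same choice three times
print(log.stable_rule("vendor_unknown"))    # unsettled: only one observation
```

The design choice worth noticing is that the log keeps the reason alongside the choice, so a promoted rule stays auditable: anyone can read back why it exists. A single conflicting decision for the same condition makes `stable_rule` return `None` again, which hands the case back to a human.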

Why the field isn’t getting there

If the vision is clear and the division of labor is precise, why isn’t this what the industry is building?

There’s a quiet assumption running through most of the AI industry right now, and it goes something like this: if we make models big enough, give them enough data, enough context, enough tools, and enough autonomy, they’ll eventually understand what they’re doing. This assumption drives everything — the push toward autonomous agents, the frameworks that let LLMs decide what tools to call and when they’re done, the entire trajectory toward more AI, less human, maximum autonomy.

But the assumption is false. LLMs do not understand. They will not understand. This is not a limitation that scaling resolves. It’s architectural. You can make a pattern matcher bigger, faster, and more accurate. You cannot make it abstract. And the systems being built on this false assumption are exactly what you’d expect: confident but unreliable, fluent but shallow, autonomous but unaccountable.

The consequences are visible everywhere. AI slop is real. The internet is drowning in AI-generated content that says nothing, means nothing, and exists only because it was cheap to produce. When “good enough” becomes the bar — when people use AI as a substitute for thinking rather than a tool for thinking — the result is a flood of mediocrity that actively devalues genuine human work. This isn’t a hypothetical problem. It’s happening now, and it’s corrosive. And it makes it harder for everyone — including people using AI well — to have their work recognized, trusted, and valued.

Underneath almost every case of AI slop is the same pair of errors: an overestimation of what the AI is doing, and an underestimation of what the human is failing to do. The result isn’t AI-assisted work; it’s AI-substituted work. The human abdicated the one role that only humans can fill: judgment.

What got faster and what didn’t

Part of what fuels this confusion in the software industry in particular is that AI has genuinely changed the speed of software development — but people are misreading what got faster. The 80% of work that’s mechanical — scaffolding, boilerplate, first drafts, routine implementation — now takes a fraction of the time it used to. That’s real and significant. But the 20% that’s actually hard — the deep thinking, the architecture decisions, the edge case reasoning, the work of turning a prototype into something reliable — still takes the vast majority of the time. The hard parts are hard for reasons that have nothing to do with typing speed. The mistake is looking at the acceleration of the easy work and concluding that the nature of the work has changed. It hasn’t. What’s easy got easier. What’s hard stayed hard.
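The arithmetic behind this can be sketched in a few lines. The numbers are assumptions chosen purely for illustration: that the mechanical work once took 80% of the elapsed time, and that AI makes it ten times faster. Neither figure is a measurement.

```python
def time_after_speedup(mechanical_share: float, speedup: float) -> tuple[float, float]:
    """Return (new total time, share of that time spent on hard work).

    Times are fractions of the original project duration. Only the
    mechanical share is accelerated; the hard share is left unchanged.
    """
    hard = 1.0 - mechanical_share
    total = hard + mechanical_share / speedup
    return total, hard / total


# Assumed inputs: 80% mechanical work, made 10x faster.
total, hard_share = time_after_speedup(mechanical_share=0.8, speedup=10.0)
print(f"new total: {total:.2f} of original, hard work: {hard_share:.0%} of what remains")
# prints: new total: 0.28 of original, hard work: 71% of what remains
```

Under these assumed numbers the project shrinks to about 28% of its former length, and the hard work now accounts for roughly 71% of it. Even an unlimited speedup on the mechanical part could not push the overall gain past 5x, because the hard fifth is untouched.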

To be clear: there’s nothing wrong with a non-coder using AI to build a prototype that demonstrates a real use case. That’s genuinely exciting, and the people doing it are often solving real problems with real creativity. But a prototype is not a product. The gap between a working demo and a reliable system isn’t linear — it’s where expertise lives. Knowing what to build is one thing. Knowing how to build it so it doesn’t collapse under real-world conditions is another thing entirely, and no amount of AI fluency bridges that gap.

The danger isn’t “vibe-coding” itself. It’s the Dunning-Kruger effect that AI fluency amplifies. When the output looks polished and the model sounds confident, it’s easy to mistake that surface for depth — to believe you’ve built something solid when you’ve built something that merely appears solid. And this is getting worse, not better. Each new generation of models doesn’t close the gap between sounding right and being right — it widens it. The outputs become more authoritative, more polished, harder to distinguish from genuine expertise.

Which means the scarce and valuable skill becomes discernment: the ability to tell genuine quality from fluent imitation. Yet a surprising number of genuinely thoughtful people, including experienced leaders and decision-makers, have arrived at the opposite conclusion: that deep expertise is becoming unnecessary. That LLMs can substitute for the real thing. That you don’t need to understand the code if the model wrote it, that you don’t need domain knowledge if the model can fake it convincingly enough.

The deterioration this causes isn’t linear — it’s exponential. Every decision made without genuine understanding introduces errors that compound. The AI can’t catch them because it doesn’t understand the system any better than the person prompting it. Each layer of AI-generated work built on top of the last inherits and amplifies the mistakes beneath it. Under expert oversight, errors get caught early, corrected, and prevented from propagating. Without it, they cascade — and by the time the system is visibly failing, the accumulated debt is so deep that only a genuine expert can untangle it. If one is still around to ask.
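One way to see why the decay is not linear is a toy model, offered only as an illustration and not as a measurement of any real system. It assumes each layer of work is independently sound with some probability, and that expert review catches a fixed fraction of the faulty layers. The function name and all the numbers are hypothetical.

```python
def chance_stack_is_sound(layers: int, p_sound: float, catch_rate: float = 0.0) -> float:
    """Probability that every layer in a stack is free of uncorrected errors.

    Toy model: each layer is independently sound with probability p_sound,
    and a reviewer catches and fixes a fraction catch_rate of the faulty ones.
    """
    per_layer = p_sound + (1.0 - p_sound) * catch_rate
    return per_layer ** layers


# Assumed numbers: 90% of layers are sound; review catches 90% of the rest.
for n in (1, 5, 10, 20):
    unreviewed = chance_stack_is_sound(n, p_sound=0.9)
    reviewed = chance_stack_is_sound(n, p_sound=0.9, catch_rate=0.9)
    print(f"{n:>2} layers: unreviewed {unreviewed:.2f}, reviewed {reviewed:.2f}")
```

With these made-up inputs, twenty unreviewed layers are all sound only about 12% of the time, against roughly 82% with review. The gap grows with every layer added, which is the compounding the paragraph above describes.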

Which makes it genuinely bewildering to watch companies lay off tens of thousands of workers in the name of AI-driven efficiency. They are shedding irreplaceable human capital (domain expertise, institutional knowledge, the judgment that only comes from years of doing the actual work) on the bet that AI can replace it. Instead of using AI to make their people more productive, producing more with the same team, they’re producing the same amount with fewer people and calling it progress. That expertise isn’t coming back. Without it, there’s nobody left who can tell whether the AI’s output is any good. These are the same two errors, compounding at organizational scale.

Why this matters now

The agentic AI space is exploding. New frameworks and paradigms launch weekly, each engineered independently to solve a specific pattern of AI-driven work. Almost all of them share the same implicit assumption: AI should do more, humans should do less, and the goal is maximum agent autonomy.

We think this is exactly backward. And we think the proliferation of competing approaches — each solving one pattern while ignoring the others — reflects a deeper problem. The field is missing a foundation. Not just a technical one, but a normative one — a clear answer to the question “what are we actually building, and for whom?”

Our answer: systems that systematically encode human expertise into auditable, owned system behavior. AI is the exploration engine. Humans are the knowledge source. The system’s job is to bridge the two: to make AI maximally useful to human judgment, and then to progressively encode that judgment into something reliable.

Everything we’ll write about on this blog follows from that premise. All of it traces back to a single conviction: the most powerful AI systems aren’t the ones that need the most AI.

The model doesn’t understand your workflow. Only you do.